2018-2019 HTDTWT Seminar Ana Teixeira Pinto: FEEDBACK FORMS - from month to month

Seminar 6: April

In the depoliticized atmosphere of post '89 a great deal of intellectual effort was devoted to conceptualizing emancipation in a manner that would involve freedom from the masses rather than freedom for the masses. In the resulting retreat from collective organization, mobilization and militancy, connectivity and the digital platforms that afford it were fetishized as a form of grass-roots, network-enabled democracy. Millennials in particular are nudged to align their identities with start-ups and social media platforms (much as their parents did with the American Dream and its promises of upward mobility) and to misrecognize technological affordance as a form of temporal, and by extension social, transcendence. But, as Jodi Dean notes, by virtue of its individuating effects the network has a nihilistic potential.

Big data, Shoshana Zuboff argues, “is not a technology or an inevitable technology effect. It is not an autonomous process [...] It originates in the social, and it is there that we must find it and know it. [...] Markets in behavioral control [are] composed of those who sell opportunities to influence behavior for profit and those who purchase such opportunities. [...] Google’s Chief Economist Hal Varian celebrates such possibilities as new forms of contract, when in fact they represent the end of contracts. Google’s rendering of information civilization replaces the rule of law and the necessity of social trust as the basis for human communities with a new life-world of rewards and punishments, stimulus and response.”

Reading:

Zuboff, Shoshana. "Big other: surveillance capitalism and the prospects of an information civilization." Journal of Information Technology 30 (2015): 75–89.

Dean, Jodi. "Why the Net is Not a Public Sphere." Constellations 10, no. 1 (2003).

Bateson, Gregory. "A Theory of Play and Fantasy." Psychiatric Research Reports 2 (1955): 39-51.

Seminar 5: March

The most salient feature of the far-right movement that became known as the alt-right is its relation with IT, rather than with the diminished expectations of the post-industrial working class. Within this new configuration of fascist ideology taking shape under the aegis of, and working in tandem with, neoliberal governance, whiteness is not simply an antipolitical concept, but the very source of antipolitics: the aspirational glue that leads those who are hurt economically to invest themselves nonetheless, libidinally and symbolically. From this perspective the online culture wars are a proxy for a greater battle around de-Westernization, imperialism and loss of hegemony. Tech culture adopts the attitude of the hacker counterculture while aligning itself with the dominant class, and thus “opens up a poisonous conduit between the two.”(1) If every rise of Fascism bears witness to a failed revolution,(2) one could say that the rise of cryptofascist tendencies within the tech industry bears witness to the failures of the “digital revolution,” whose promises of a post-scarcity economy and socialized capital never came to pass.

After noting that scientific and religious outlooks are not behaviorally incongruous, sociologist Colin Campbell wrote about cults and cultic phenomena as examples of seekership, whose participants “have adopted a problem-solving perspective while defining conventional religious institutions and beliefs as inadequate.”(3) One of the most important ingredients of cultic culture is “deviant science and technology.” Those who “are impressed by the demonstrable superiority of science and as a consequence desire to hold a scientific outlook” are seldom “in a position to distinguish between what are orthodox and what are heterodox scientific views. They may, as a consequence end up believing in flying saucers and ESP because of the convincing scientific ‘evidence’.”(4) A tangled skein of wayward visions—white supremacists, masculinists, anti-feminists, seasteaders, transhumanists, Bitcoin freaks, Islamophobes, neo-monarchists, and anti-Semites—the new far right is unified by anti-political correctness, paranoia and, most importantly, seekership.

(1) Mark Greif, “What Was the Hipster?,” New York Magazine, Oct 24, 2010. http://nymag.com/news/features/69129/.

(2) This statement is usually attributed to Walter Benjamin, though it is at best an elision of his arguments and not a direct quote.

(3) Colin Campbell, The Cult, the Cultic Milieu and Secularization (Lanham: AltaMira Press, 2002), 19.

(4) Ibid.

Reading:

Campbell, Colin. The Cult, the Cultic Milieu and Secularization. Lanham: AltaMira Press, 2002.

Jackson, Zakiyyah Iman. "Outer Worlds: The Persistence of Race in Movement 'Beyond the Human'." GLQ: A Journal of Lesbian and Gay Studies 21, no. 2-3 (2015): 215–46.

Seminar 4: February

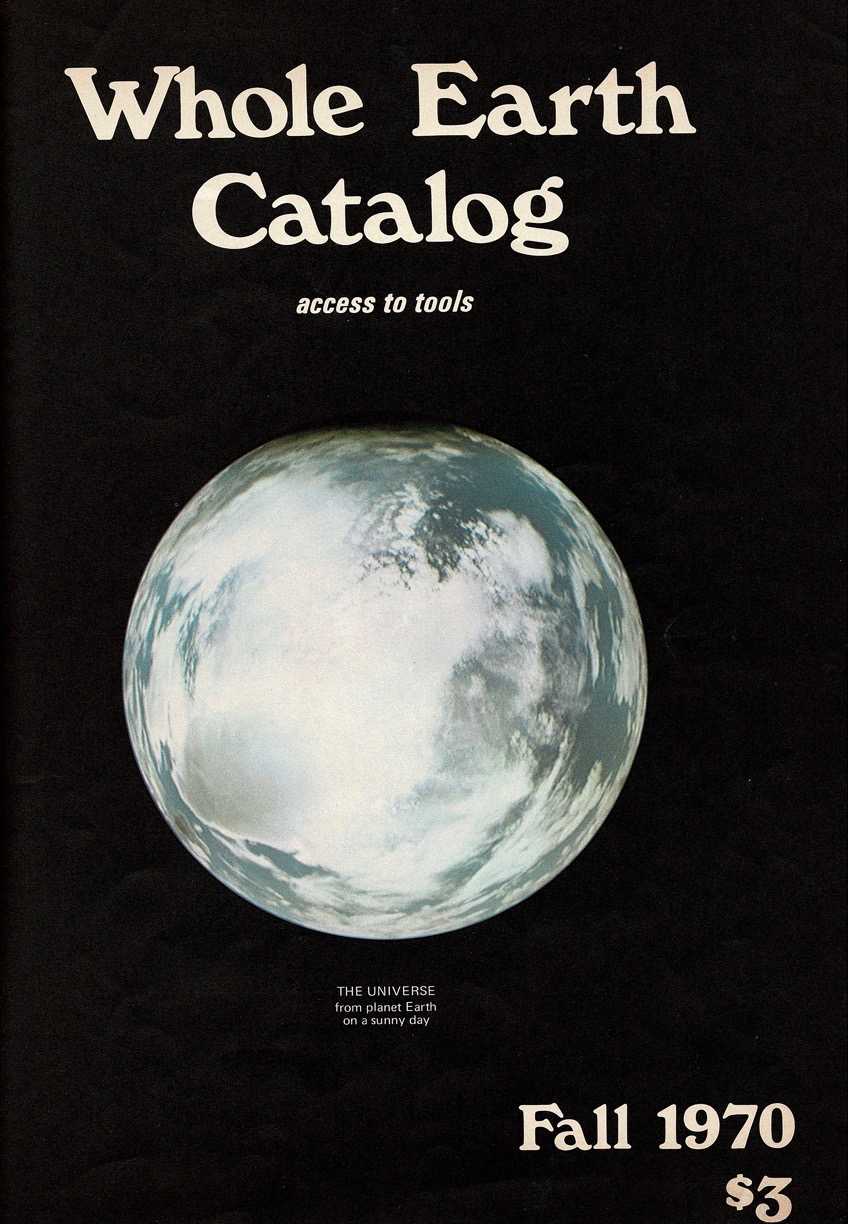

In the 1960s, two distinct anti-establishment movements emerged in America: the New Left and the New Communalists. Whilst the New Left sought to effect political change, mostly by organizing against the Vietnam War, the New Communalists felt that any engagement with politics, the state or government as such was itself the problem. Between 1965 and 1972, a great many young, mostly white, Americans headed out of the cities and into the rural parts of northern California and built communes. The Whole Earth Catalog and the "WELL" (Whole Earth 'Lectronic Link) emerged from this communalist spirit, though, as Fred Turner argues, few have rigorously explored its roots in the American counterculture of the 1960s.

Another offshoot of the New Communalists, Biosphere 2, attracted a flurry of interest recently, due to the project's fortuitous connection to Steve Bannon, the former White House strategist whose short-lived tenure in the Trump administration was the subject of a great deal of scrutiny. But Bannon’s involvement was not the only adversity ever to afflict the ill-fated experiment. Biosphere 2 was the brainchild of John Allen, a New Age visionary, who led a commune south of Santa Fe called “Synergia Ranch”. Whereas the Whole Earth Catalog or the "WELL" defined a virtual community, i.e. a “new form of technologically enabled social life,” Biosphere 2 was a real miniature world, meant to sustain a crew of eight human inhabitants (four men and four women) for a period of two years inside its hermetic dome. Synergia Ranch had an apocalyptic ethos: worried about impending environmental collapse, Allen wanted to escape Earth by building new colonies in space. But his appeals to venture beyond Earth, to build the ultimate version of the "good life" in outer space, leave unexamined the racial and ideological dimensions that connote this beyond, as well as the peculiar combination of settler colonialism and white flight that characterizes the Biosphere project. Rather more successful, Stewart Brand’s Whole Earth Catalog managed to reconcile the opposing pulls of technophilia and technophobia, in a synthesis held together by a mix of “systems theory and countercultural mysticism” (Turner: 2005).

Reading:

Turner, Fred. “Where the Counterculture Met the New Economy: The WELL and the Origins of Virtual Community.” Technology and Culture 46 (2005): 485–512.

Seminar 3: January

Samsung’s Smart TVs come with a fine-print warning: if you enable voice recognition, your spoken words will be ‘captured and transmitted to a third party’, so you might not want to discuss personal or sensitive information in front of your TV. Even if voice recognition is disabled, Samsung will still collect your metadata – what and when you watch, including facial recognition data – though you won’t be able to use their interactive features. The SmartSeries Bluetooth toothbrush from Oral-B, a Procter & Gamble company, connects to a brushing app on your smartphone, which keeps a detailed record of your dental hygiene. The company advertises that you can share such data with your dentist, though, in a privatized health market, it’s more likely the purpose of such technology is to share data with your insurance company.

The cultural logic of the information age is predicated on an inversion of the gaze: within this fusion of surveillance and control, the screen, as Jonathan Crary has noted, ‘is both the object of attention and (the object) capable of monitoring, recording and cross-referencing attentive behaviour.’1 Data processing – whose reach spans the NSA, credit rating agencies and health insurance providers, up to the sorting algorithms used by Google or Instagram – is predictive, modeling future actions on previous behaviour. As such, as Orit Halpern argued in her 2015 book Beautiful Data, data processing implies a model of temporality in which the past is a standing reserve of information, waiting to be mined.

Reading:

Stalder, Felix. “The Fight over Transparency: From a Hierarchical to a Horizontal Organization.” Open! Platform for Art, Culture and the Public Domain. Nov 18, 2011. https://www.onlineopen.org/the-fight-over-transparency#contributor-bio-0

Additional Reading:

Jameson, Fredric. "Cognitive Mapping." 1990.

Buck-Morss, Susan. "Envisioning Capital: Political Economy on Display." Critical Inquiry 21, no. 2 (1995).

Galloway, Alexander. "Are Some Things Unrepresentable?" Theory, Culture & Society 28, no. 7-8 (2011): 85-102.

Seminar 2: December

When, in 1913, John B. Watson gave his inaugural address at Columbia University, “Psychology as the Behaviourist Views It,”(1) he described psychology as a discipline whose “theoretical goal is the prediction and control of behaviour.” Strongly influenced by Ivan Pavlov’s study of conditioned reflexes, Watson aimed to anchor psychology firmly in the field of the natural sciences. By black-boxing mentation or internal states, behaviourism could operate on observable behaviour alone, creating a psychology completely devoid of subjectivity.

Following Watson’s lead, American psychologists began to treat all forms of learning as skills, from “maze running in rats […] to the growth of a personality pattern.”(2) For the behaviourist movement, both animal and human behaviour could be entirely explained in terms of reflexes, stimulus-response associations, and the effects of reinforcing agents upon them. Burrhus Frederic Skinner researched how specific external stimuli affected learning using a method that he termed “operant conditioning”. While classic (or Pavlovian) conditioning simply pairs a stimulus and a response, in operant conditioning the animal’s behaviour is initially spontaneous, but the feedback that it elicits reinforces or inhibits the recurrence of certain actions.

Like Watson’s, Skinner’s method black-boxes the animal’s internal states to operate on observable behaviour alone. From this perspective an animal is just like a machine because it can be made to behave like a machine. But this equivalence also invites its reversal: producing an identical type of behaviour could also be construed as creating an identical being.

(1) This was the first of a series of lectures that later became known as the “Behaviourist Manifesto”.

(2) John A. Mills, Control: A History of Behavioral Psychology (New York: NYU Press, 1998), 84.

Seminar 1: November

C: Will X please tell me the length of his or her hair?

– A. M. Turing, Computing Machinery and Intelligence, 1950

In 1950, Alan Turing described a thought experiment, which became widely known as the ‘Turing Test’, though Turing himself termed it the ‘Imitation Game’. The Imitation Game was conceived to tackle the issue of artificial intelligence, at that time known as machine intelligence, but the first experiment does not involve machines. Instead, Turing asks the reader to imagine two rooms, connected via computer screen and keyboard to a third room, in which a person who will be the game arbiter sits. In the first room one finds a man, in the second a woman, who are hidden from view but able to communicate via the computer terminal. The judge’s job is to determine which player is the man and which is the woman, whereas the woman’s job is to deceive the judge into misidentifying her as the male player. The second experiment involves a variation of the same game, this time replacing one player with a machine. Now the judge’s job is to decide which of the contestants is human. If he gets it wrong often enough, the computer must be a passable simulation of a human being and, hence, intelligent.

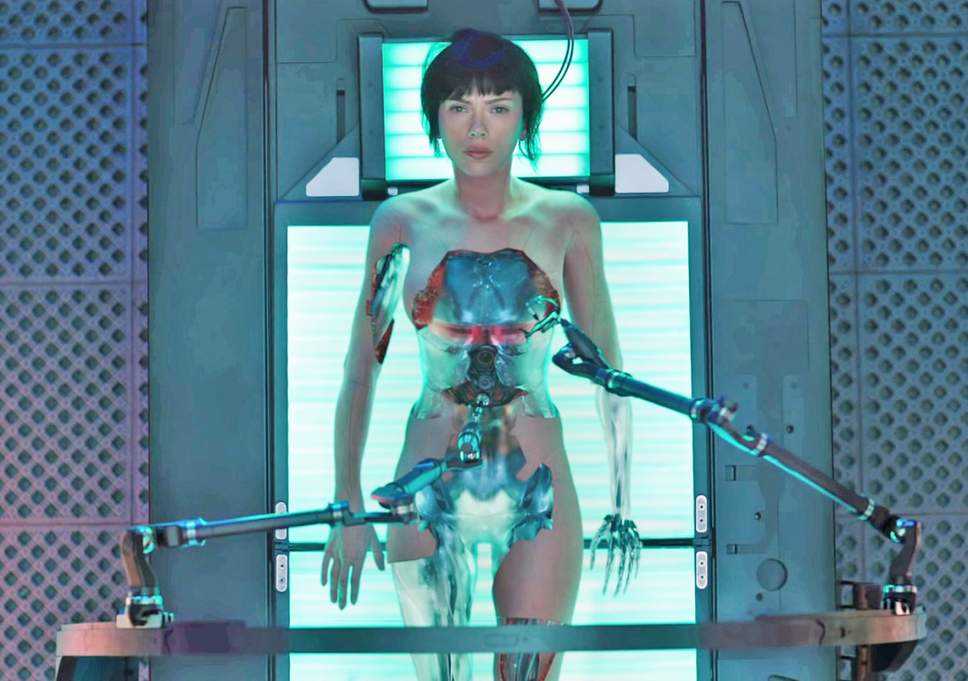

The Imitation Game is usually misunderstood as proof that the converse is also true, that if a computer passes the test it must be a passable simulation of intelligence and, hence, human. Whilst the queer dimension of Turing’s thought experiment was overlooked, the gendering of technology became a central theme in pop culture and pulp science. In spite of the feminist appropriation of the cyborg body as a means to depart from gender dichotomies, the cyborg became a reactionary figure (humanoid robots or commercial personifications of AI are gendered according to traditional roles: military technology is male, service technology female) predicated on the relation between the male gaze and the female body rather than on a double articulation of difference – sexual difference and machinic difference.

Mandatory Reading:

Hayles, N. Katherine. “Boundary Disputes: Homeostasis, Reflexivity, and the Foundations of Cybernetics.” Configurations 2, no. 3 (1994): 441-467.

Recommended Reading:

Halpern, Orit. “Inhuman Vision.” Journal of the New Media Caucus (Fall 2014). http://median.newmediacaucus.org/art-infrastructures-information/inhuman-vision/.

Jackson, Zakiyyah Iman. “Outer Worlds: The Persistence of Race in Movement ‘Beyond the Human’.” GLQ: A Journal of Lesbian and Gay Studies 21, no. 2-3 (2015): 215–46.

Halpern, Orit. “Schizophrenic Techniques: Cybernetics, the Human Sciences, and the Double Bind.” S&F Online 10, no. 3 (2012). http://sfonline.barnard.edu/feminist-media-theory/schizophrenic-techniques-cybernetics-the-human-sciences-and-the-double-bind/.

Theweleit, Klaus. Male Fantasies. Minneapolis: University of Minnesota Press, 1987.